Densifying Sparse Ocean Depth Observations

DepthDif explores conditional diffusion for reconstructing dense subsurface ocean temperature and salinity fields from sparse, masked observations.

The repository currently supports:

- EO-conditioned multi-band reconstruction (surface condition + deeper target bands)

- cross-source conditioning, where the EO surface SST can come from OSTIA while the deeper targets remain Copernicus (GLORYS) reanalysis

- public inference through the depth-recon package on PyPI, including ARGO/OSTIA week exports that run without GLORYS inputs

- latent diffusion workflow with autoencoder-based depth compression (see Autoencoder)

Project Links

- Analysis: explore DepthDif visualizations on the analysis landing page, which collects the one-week globe, the temporal globe, the spatial error dashboard, and the temporal error dashboard.

Model Description

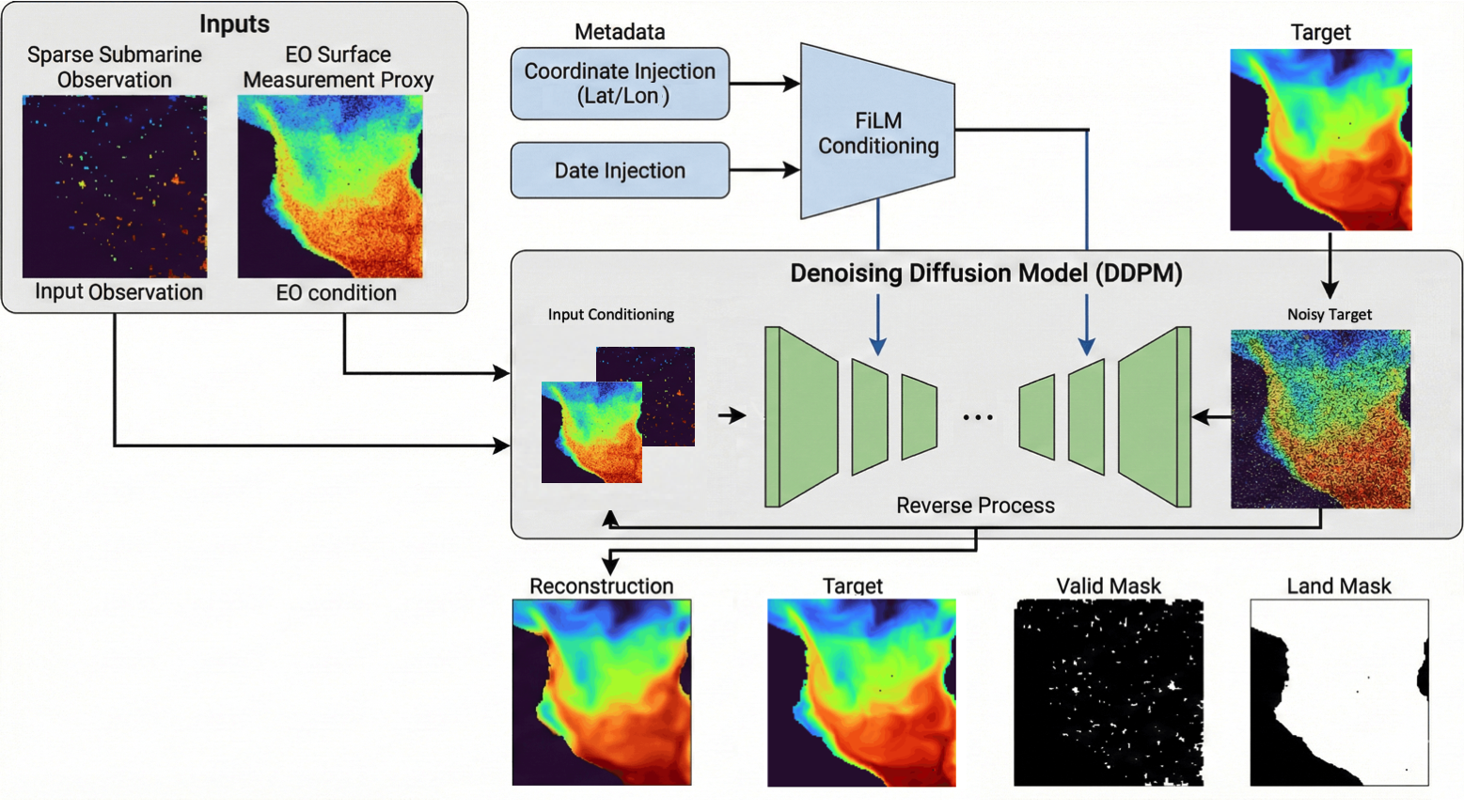

DepthDif is a conditional diffusion model: it reconstructs dense GLORYS depth fields from sparse ARGO profile observations. The model is conditioned on a scenario-selected surface EO field (OSTIA SST for the temperature and joint scenarios, sea surface salinity (sos) for the salinity scenario), the ARGO observation support, the GLORYS spatial support, and coordinate/date context. See Model for the full details.
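A minimal sketch of how the conditioning rasters listed above might be stacked into one channel-first input array. The function name build_condition, the channel order, and the zero-fill for unobserved ARGO cells are assumptions for illustration, not the repository's actual layout (Data Contract documents that). Coordinate/date context is omitted because it is injected through FiLM conditioning (see Data + Coordinate Injection) rather than as extra channels.

```python
import numpy as np


def build_condition(eo_surface, argo_sparse, argo_mask, glorys_mask):
    """Stack conditioning rasters into one (C, H, W) float32 array.

    Hypothetical layout, for illustration only:
      eo_surface  (H, W)     scenario-selected surface EO field
      argo_sparse (D, H, W)  depth-aligned ARGO values (NaN where unobserved)
      argo_mask   (D, H, W)  bool, ARGO observation support
      glorys_mask (H, W)     bool, GLORYS spatial support
    """
    # Replace unobserved cells with 0 so NaNs never reach the network.
    sparse = np.where(argo_mask, argo_sparse, 0.0)
    stacked = np.concatenate(
        [
            eo_surface[None],                      # (1, H, W)
            sparse,                                # (D, H, W)
            argo_mask.astype(np.float32),          # (D, H, W)
            glorys_mask[None].astype(np.float32),  # (1, H, W)
        ],
        axis=0,
    )
    return stacked.astype(np.float32)
```

Passing the masks as explicit channels lets the network distinguish a true zero value from a missing observation, which a zero-filled value channel alone cannot express.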

In the GeoTIFF training workflow, EO surface conditioning comes from the scenario-selected surface raster, subsurface targets come from GLORYS, and sparse inputs come from ARGO/EN4 profiles after depth alignment. Salinity is a scenario-selected field: --scenario salinity trains salinity only, and --scenario joint trains temperature and salinity together.
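The scenario switch can be pictured as a small lookup table. This is a hypothetical sketch: the Scenario dataclass, the source labels ostia_sst and sos, and the "temperature" scenario name are assumptions (only salinity and joint appear above as --scenario values). The surface-source and target pairings follow the description in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    surface_source: str       # EO raster used as the surface condition
    targets: tuple[str, ...]  # GLORYS subsurface variables to reconstruct


# Hypothetical table: OSTIA SST conditions temperature and joint runs,
# sea surface salinity (sos) conditions salinity-only runs.
SCENARIOS = {
    "temperature": Scenario("ostia_sst", ("temperature",)),
    "salinity": Scenario("sos", ("salinity",)),
    "joint": Scenario("ostia_sst", ("temperature", "salinity")),
}


def resolve_scenario(name: str) -> Scenario:
    """Map a --scenario value to its surface source and target variables."""
    try:
        return SCENARIOS[name]
    except KeyError:
        raise ValueError(
            f"unknown scenario {name!r}; expected one of {sorted(SCENARIOS)}"
        ) from None
```

Keeping the pairing in one table means a CLI parser could use sorted(SCENARIOS) as its allowed choices, so the flag and the data loader cannot drift apart.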

Ambient diffusion in brief: the forward process at step t is x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon, with epsilon ~ N(0, I).
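A NumPy sketch of the forward noising step above. The linear beta schedule from 1e-4 to 0.02 is the common DDPM default and is an assumption here; DepthDif's configured schedule may differ (see Model Settings). The helper names are hypothetical.

```python
import numpy as np


def linear_alpha_bar(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product alpha_bar_t = prod_{s<=t} (1 - beta_s) for a linear schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)


def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ q(x_t | x_0) for an integer step t; also return the noise used."""
    eps = rng.standard_normal(x0.shape)
    a = alpha_bar[t]
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps
    return x_t, eps
```

Returning eps alongside x_t is what an epsilon-prediction objective needs as its regression target.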

For ambient-occlusion training with observed mask m and a further-corrupted mask m' <= m (every cell kept in m' is also observed in m), optimize the loss L on the original observation support intersected with the valid-target support and the GLORYS spatial support (x_valid_mask ∩ y_valid_mask ∩ land_mask), while conditioning the model only on the stronger corruption m'.
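The two masks can be sketched as follows. This assumes boolean NumPy arrays, a hypothetical uniform drop probability for deriving m' from m, and a plain masked MSE standing in for L (this page does not specify the loss form). The function names are illustrative, not the repository's.

```python
import numpy as np


def further_corrupt(m, drop_prob, rng):
    """Return m' <= m by hiding a random fraction of the observed cells."""
    keep = rng.random(m.shape) >= drop_prob
    return m & keep


def loss_support(x_valid_mask, y_valid_mask, land_mask):
    """Cells where the loss is evaluated: x_valid ∩ y_valid ∩ land."""
    return x_valid_mask & y_valid_mask & land_mask


def masked_mse(pred, target, support):
    """Mean squared error over support cells; 0.0 if the support is empty."""
    w = support.astype(pred.dtype)
    denom = max(w.sum(), 1.0)
    return float(((pred - target) ** 2 * w).sum() / denom)
```

The network input is built from m', but the loss covers all of m within the valid supports, including the cells that were dropped from m'. Those held-out observations supervise the model on values it was not shown, which is what pushes it to infill rather than copy its inputs.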

Documentation Map

- Quick Start: environment setup + fastest train/infer path

- Production Datasets: OSTIA L4 + EN4 profile dataset specs and download workflows

- Data Overview: high-level modalities, variables, shared axes, and cadence

- Data Source: source product and raw variable tables

- Data Export: GeoTIFF dataset layout, quantization, and export command

- Dataset Statistics: measured ARGO, raster, patch, and overlap counts

- Data Contract: model-facing tensor shapes, masks, and normalization

- Model: architecture and diffusion conditioning flow

- Temporal Dimension Ideas: options and tradeoffs for extending from (B, C, H, W) to (B, T, C, H, W) on real dataset windows

- Autoencoder + Latent Diffusion: AE architecture, latent task setup, launch commands, and constraints

- Data + Coordinate Injection: coordinate/date FiLM conditioning details

- Training: CLI usage, run outputs, logging, checkpoints

- Inference: public API, global export, script, and direct predict_step workflows

- Public Inference Package: depth-recon install, API, CLI, asset resolution, and outputs

- Full settings documentation: per-file config keys, defaults, and explanations

- Sampling Diagnostics: denoising intermediates, MAE-vs-step, and schedule profiling

- Experiments: qualitative test results

- Model Settings: key config knobs, runtime mapping, and full settings reference

- Development: known issues, TODOs, and roadmap

- API Reference: auto-generated module reference via mkdocstrings